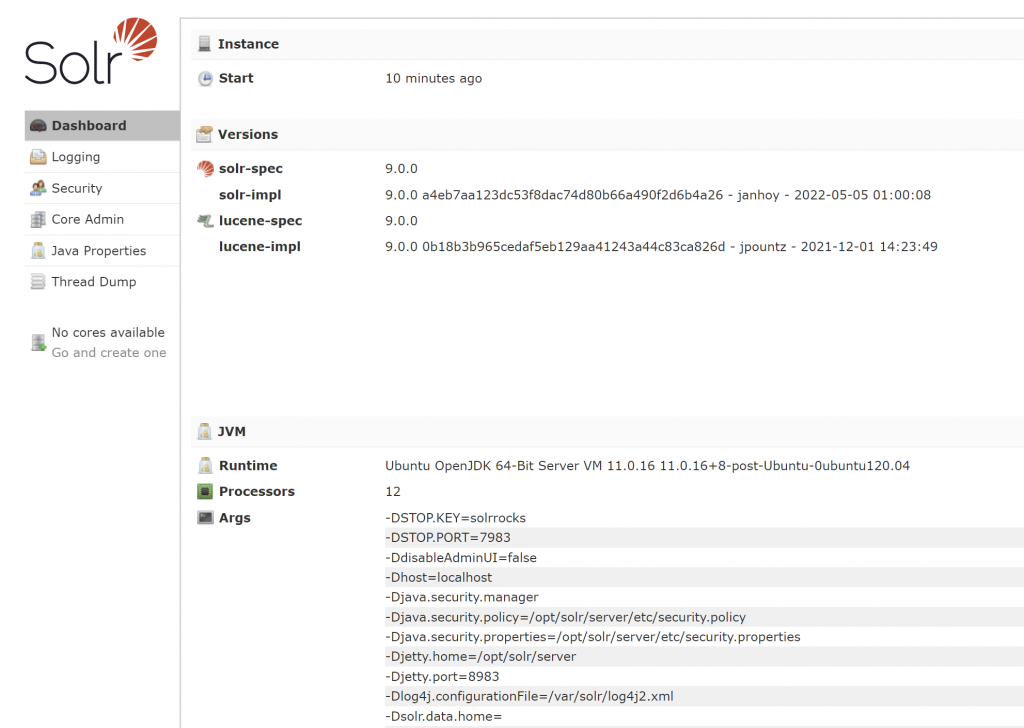

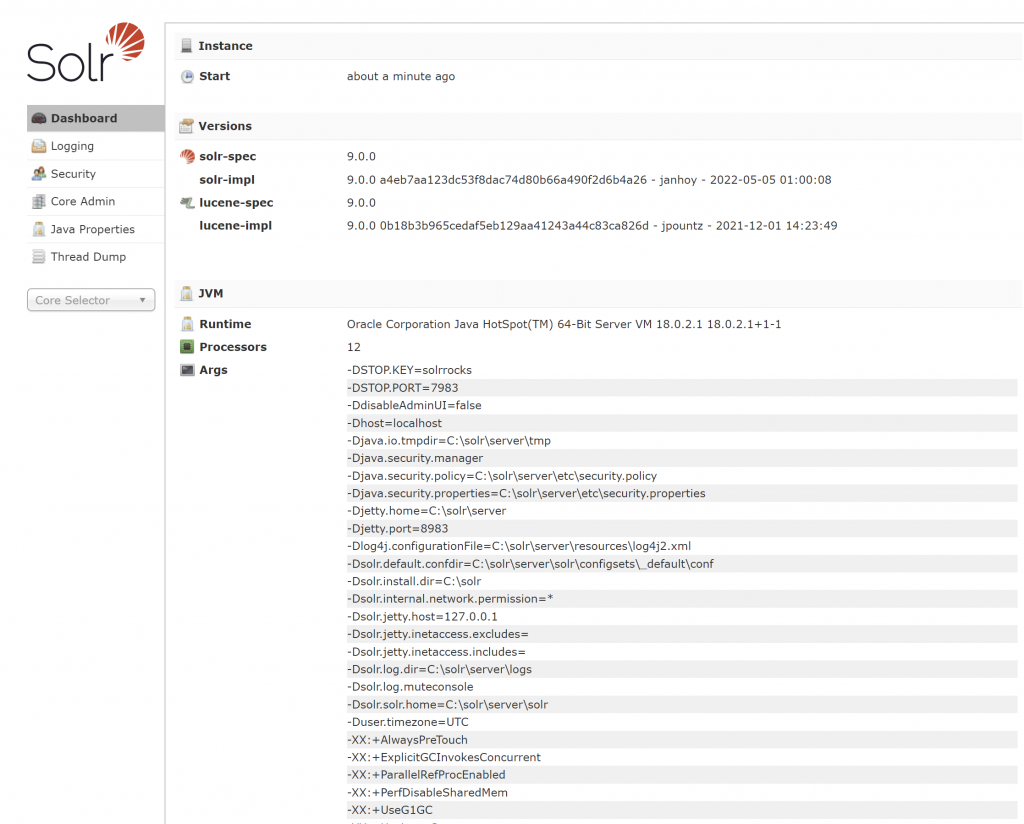

SolrNet is a .NET library for interacting with Solr from C#.

Solr is a full-text search server built on top of Apache Lucene, which is itself a full-text search engine library.

SolrNet generates the REST calls needed to talk to a Solr server, so you do not have to build them by hand.

One of the most important classes is the QueryOptions class. It lets you specify several query options, and some of them probably deserve their own blog posts.

For paging the results, the following options can be used:

    var pageNumber = 2;

    var options = new QueryOptions()
    {
        // Number of results per page
        Rows = 10,
        // Zero-based offset of the first result to return
        StartOrCursor = new StartOrCursor.Start((pageNumber - 1) * 10)
    };

The code above fetches 10 results, starting from the 11th. The pageNumber variable is 2, so (pageNumber - 1) * 10 evaluates to 10, which means the first 10 results are skipped. The default start is 0, i.e. from the beginning.
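The offset arithmetic can be wrapped in a small helper so the page size is not repeated in two places. This is only a minimal sketch and not part of SolrNet: `PagingHelper` and `StartOffset` are hypothetical names introduced here, and the helper just computes the zero-based offset that would be passed to `StartOrCursor.Start`.

```csharp
using System;

// Hypothetical helper (not part of SolrNet): converts a 1-based page number
// into the zero-based offset that StartOrCursor.Start expects.
public static class PagingHelper
{
    public static int StartOffset(int pageNumber, int pageSize)
    {
        if (pageNumber < 1)
            throw new ArgumentOutOfRangeException(nameof(pageNumber), "Page numbers start at 1.");
        if (pageSize < 1)
            throw new ArgumentOutOfRangeException(nameof(pageSize), "Page size must be positive.");

        // Page 1 starts at offset 0, page 2 at pageSize, and so on.
        return (pageNumber - 1) * pageSize;
    }
}
```

With a helper like this, the options could be built with `Rows = pageSize` and `StartOrCursor = new StartOrCursor.Start(PagingHelper.StartOffset(pageNumber, pageSize))`. For page 2 with 10 rows, the offset is 10, the same as in the example above.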

Another useful option is Fields, which specifies the fields to retrieve. Think of it like listing the columns in a SQL statement instead of fetching everything, i.e. SELECT col1, col2 instead of SELECT *.

    var options = new QueryOptions()
    {
        // Only these fields are returned for each document
        Fields = new[] { "col1", "col2" }
    };

I hope this blog post helps someone.

–

Mr. Kanti Kalyan Arumilli

B.Tech, M.B.A

Founder & CEO, Lead Full-Stack .Net developer

ALight Technology And Services Limited